Security Overview

This section provides a high-level overview of security for Solace event brokers, including:

- the goals for achieving secure systems—restricting data access, preventing data loss or damage, role separation, and establishing audit trails

- how these goals can be achieved through secure system capabilities such as authentication, authorization, auditing, and encryption

- considerations when securing the event broker's operating environment

To determine whether your system complies with Solace best practices, contact our Professional Services Group to arrange a security evaluation.

To obfuscate passwords, Solace recommends setting up the admin password and redundancy pre-shared keys using file paths, which allow you to configure these passwords using secrets, or the encryptedpassword configuration key. For more information about using file paths, see Configuring Secrets.

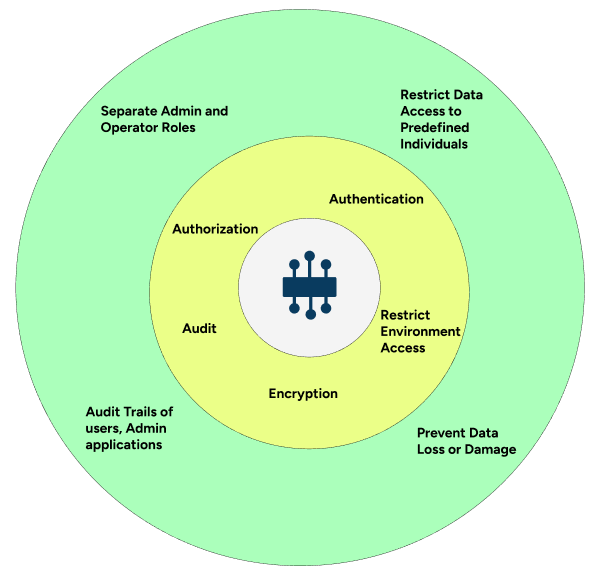

Goals for a Secure System

There are many descriptions of a secure system, from the Trusted Computer System Evaluation Criteria (TCSEC) of the US Department of Defense to modern PCI, HIPAA, or SOC-2 compliance requirements. Despite the differences, all these descriptions can be reduced to the following secure system goals:

- Restricting data access to users with predefined privileges and security level access.

Every user accessing the system must be correctly identified and matched to predefined security policies. As well, every piece of data and infrastructure must be guarded with matching security policies.

- Preventing malicious access or accidental loss of or damage to data.

This includes restricting access, as previously mentioned, as well as protecting data from loss or unauthorized access both while it is being transmitted and while it is at rest. Loss prevention also requires the ability to recover data in the event of localized disasters, such as earthquakes, fires, or floods. In addition, any remotely stored data must have the same level of secure access as the original copies.

- Separating access roles.

System operators must have distinct roles from users and applications that require data access.

- Auditing the activities of users, applications, and administrators.

To make sure it authenticates and authorizes the right individuals, the system needs to be self-monitoring and log information about both malicious activity (such as brute force password attacks) and expected activity (data processing and configuration changes).

Capabilities for Meeting Security Requirements

To achieve the security goals discussed above, a system must be able to:

- Authenticate each user and administrator accessing the system.

- Authorize the access to data and system settings against policies that restrict that access to predefined sets of activities, both for administrators and users.

- Audit the access for administrators and users, including the data they accessed and the changes they made.

- Encrypt data both in motion and at rest and ensure encryption keys are disseminated only to authorized entities.

- Monitor and restrict environmental changes and have processes in place to authorize system-level changes.

Authentication

Authentication is a foundational technology in modern messaging systems; authentication mechanisms are built into most messaging protocols, with username and password combinations standard in their wireline headers. However, a secure system must have authentication checks that detect and prevent incorrect identification. Various mechanisms can be used to achieve this, including passwords, identification tokens, or security certificates, all of which are supported by Solace event brokers. The authentication mechanism for Solace event brokers can be as basic as an internal database configured with usernames and passwords, or a more robust mechanism using LDAP, RADIUS, Kerberos, client certificates, or OAuth OpenID Connect.

Authentication in Wireline

The Solace Message Format (SMF) protocol and the open messaging protocols that Solace supports all use a "connect" message as the first message of a client session. This connect message contains the username and authentication credentials. If the event broker receives a connect message with valid credentials, then an authenticated bi-directional session is established, and messages can flow in either direction. If the event broker does not receive a connect message with valid credentials, it disconnects the session. For REST producers, there is no authenticated session; therefore, the username and credentials must be sent with each message.
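
The connect-first rule described above can be modeled in a few lines. The following Python sketch is illustrative only: the `Session` class, the message dictionaries, and the in-memory `CREDENTIALS` table are hypothetical and do not represent the SMF wire format or any Solace API.

```python
import hmac
from dataclasses import dataclass, field

# Hypothetical credential table, for illustration only.
CREDENTIALS = {"app1": "s3cret"}


@dataclass
class Session:
    """Toy model of a client session that must begin with a connect message."""

    authenticated: bool = False
    closed: bool = False
    delivered: list = field(default_factory=list)

    def receive(self, message: dict) -> None:
        if self.closed:
            raise ConnectionError("session is closed")
        if not self.authenticated:
            username = message.get("username")
            password = message.get("password")
            # The first message must be a connect message with valid credentials.
            valid = (
                message.get("type") == "connect"
                and username in CREDENTIALS
                and isinstance(password, str)
                and hmac.compare_digest(
                    CREDENTIALS[username].encode(), password.encode()
                )
            )
            if valid:
                self.authenticated = True
            else:
                self.closed = True  # invalid or missing connect: disconnect
            return
        # Once authenticated, messages flow on the established session.
        self.delivered.append(message)
```

A REST-style producer would not fit this model, because each request would have to carry its own credentials instead of relying on an established session.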

Basic Authentication

A basic authentication scheme allows a connecting client to authenticate with an event broker by providing a valid client username and password as its credentials. Basic authentication using an internal database with username and password is suitable for small deployments or testing, but it has potential limitations. Managing many usernames and passwords in an event broker configuration is difficult and error-prone, especially over a fleet of brokers. Event brokers can perform basic authentication using look-up validation against LDAP (Active Directory) or RADIUS services, thereby eliminating the need to manage passwords internally. However, this solution requires the end application or user to store or remember the password for authenticating.

Event brokers also support fallback authentication mechanisms that allow checking credentials in multiple locations. For example, you can configure the event broker to first check the internal database, and if the username is not found or does not have a password configured, then fall back to LDAP authentication. This is particularly useful in environments where human user credentials are stored in LDAP while application credentials are managed locally on the event broker.
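
The fallback order described above can be sketched as follows. This is a minimal illustration, not the broker's implementation: `internal_db` is a hypothetical mapping of usernames to passwords (with `None` meaning no password is configured), and `ldap_check` is a hypothetical callable standing in for an LDAP lookup. Storing plain-text passwords is a simplification for the example.

```python
import hmac
from typing import Callable, Dict, Optional


def authenticate(
    username: str,
    password: str,
    internal_db: Dict[str, Optional[str]],
    ldap_check: Callable[[str, str], bool],
) -> bool:
    """Check the internal database first, then fall back to LDAP."""
    stored = internal_db.get(username)
    if stored is not None:
        # The user exists locally with a password: decide locally, no fallback.
        return hmac.compare_digest(stored.encode(), password.encode())
    # Username not found, or found without a password: fall back to LDAP.
    return ldap_check(username, password)
```

In this sketch, a wrong password for a locally managed application account is rejected without consulting LDAP, while a human user who is absent from the internal database is checked against LDAP.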

Single sign-on (SSO) with Kerberos, certificate authentication with client certificates, or token-based authentication with OAuth OpenID Connect are all options that solve the username/password retention problem on the client side.

Certificate Authentication and Revocation Checking

For most organizations, having a secure tool to manage the lifecycle of usernames and passwords is a hard requirement. Some common options are Active Directory, a cloud identity service, or client certificates. If a client connecting via TLS provides an X.509 certificate, the messaging system can validate the client's identity using the common name (CN) and encrypted signature. In addition, although certificates are issued and managed outside the messaging system, the messaging system itself can perform certificate revocation checking. Revocation checking allows a central administrator to deny access based on revoked certificates, thereby maintaining positive control over authentication.
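
As a rough illustration of identifying a client by its certificate, the Python sketch below extracts the common name from a certificate dictionary in the shape returned by Python's `ssl.SSLSocket.getpeercert()` and rejects certificates whose serial number appears in a revocation set. The `revoked_serials` set is a hypothetical stand-in for a real CRL or OCSP lookup, and signature validation is assumed to have been done by the TLS layer. This is not how the event broker is implemented.

```python
from typing import Optional, Set


def common_name(peer_cert: dict) -> Optional[str]:
    """Return the subject commonName from a getpeercert()-style dict."""
    for rdn in peer_cert.get("subject", ()):
        for key, value in rdn:
            if key == "commonName":
                return value
    return None


def is_client_allowed(peer_cert: dict, revoked_serials: Set[str]) -> bool:
    """Deny revoked certificates; otherwise require a usable common name."""
    if peer_cert.get("serialNumber") in revoked_serials:
        return False  # revoked centrally, so access is denied
    return common_name(peer_cert) is not None
```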

For details on how to use client certificates, see Client Certificate Authentication.

To learn how revocation checking is performed in Solace event brokers, see Configuring OCSP-CRL Certificate Revocation Checking.

Authenticating Administrators

Just as the messaging protocol users must be authenticated, so should system administrators. All the techniques mentioned above can be applied to managing and securing your administrator users. It might seem simplest to have a common username and password for all administrators and administrative tasks, but this makes it impossible to know exactly who is changing the system and why. For a more secure system:

- Use a distinct single sign-on for each administrator so that every change can be linked to a specific person.

- Have a change management system in place so that every system update is initiated through a change request that provides detailed information about the reason for the change.

For more information about event broker authentication, see Client Authentication and User Authentication and Authorization.

Authorization

After (or in conjunction with) authentication, the user must be authorized to access the system's data and resources. Your configuration must handle authorizing users at many levels. The following sections discuss some of the common authorization requirements and how Solace event brokers handle these requirements.

To read about the event broker's authorization process, see Client Authorization.

Authorization to Connect

External users can connect to the broker only via a firewall or a load balancer. In many cases, these intermediate devices re-write the source IP address, making it difficult or impossible to determine the original source address. To solve this problem, the system should be configured so that the firewall or load balancer accepts external connections only from trusted address ranges and event brokers accept external connections only from the firewall or load balancer addresses. This configuration prevents bypassing the firewall as a vector of attack. With event brokers, you can control which clients can connect to your event broker using Access Control Lists (ACLs).

It might also be worth considering limiting the maximum number of connections for external users to one or two to prevent compromised usernames from making thousands of connections and consuming all the broker resources. For more information, see Configuring Max Connections Per Username.
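
The two connection checks discussed above (a trusted source address range and a per-username connection cap) can be sketched together. The `ConnectionGate` class and its parameters are hypothetical; on an event broker these controls are configured through ACLs and the max-connections setting, not written as code.

```python
import ipaddress
from collections import Counter
from typing import Iterable


class ConnectionGate:
    """Toy admission check: trusted source networks plus a per-user cap."""

    def __init__(self, allowed_networks: Iterable[str], max_per_user: int) -> None:
        self.allowed = [ipaddress.ip_network(n) for n in allowed_networks]
        self.max_per_user = max_per_user
        self.active = Counter()

    def try_connect(self, source_ip: str, username: str) -> bool:
        addr = ipaddress.ip_address(source_ip)
        # Accept only connections arriving from trusted ranges, such as the
        # firewall or load balancer addresses.
        if not any(addr in net for net in self.allowed):
            return False
        # Cap concurrent connections so that one compromised username cannot
        # consume all broker resources.
        if self.active[username] >= self.max_per_user:
            return False
        self.active[username] += 1
        return True

    def disconnect(self, username: str) -> None:
        if self.active[username] > 0:
            self.active[username] -= 1
```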

Authorization to Consume Resources

You can limit the resources each group of users can consume. Limits you can enforce include:

- the number of client egress queues groups can access

- the ability of groups to read or write persistent messages

Take a look at Configuring Client Profiles to learn how you can apply common configurations to groups of clients.

Authorization to Access VPN-Level Resources

Each client connection is associated with a single Message VPN. Within each VPN, a topic namespace limits the dissemination of data across the broker, as well as the system resources clients can consume. This segregation of data is useful in multi-tenant situations. For more information, see Message VPNs.

Authorization to Send and Receive Data

You can place restrictions on the addressable data each authenticated user can produce and consume. Using ACLs, you can control the topics to which clients are allowed to publish or subscribe.

For more information about configuring ACLs, see Controlling what clients can publish to and Controlling what topics a client can subscribe to.
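
To make the idea of topic ACLs concrete, the following Python sketch evaluates a topic against a default action and a list of exceptions. It assumes simplified wildcard semantics: `*` matches within a single topic level (optionally after a literal prefix) and a trailing `>` matches one or more remaining levels. The function names are hypothetical, and the sketch does not cover every rule the event broker applies.

```python
from typing import Iterable


def topic_matches(pattern: str, topic: str) -> bool:
    """Match a topic against a pattern using simplified wildcard rules."""
    p_levels = pattern.split("/")
    t_levels = topic.split("/")
    for i, p in enumerate(p_levels):
        if p == ">" and i == len(p_levels) - 1:
            # A trailing ">" needs at least one more level in the topic.
            return len(t_levels) > i
        if i >= len(t_levels):
            return False
        if p.endswith("*"):
            # "*" matches the rest of this single level after the prefix.
            if not t_levels[i].startswith(p[:-1]):
                return False
        elif p != t_levels[i]:
            return False
    return len(p_levels) == len(t_levels)


def acl_permits(topic: str, default_allow: bool, exceptions: Iterable[str]) -> bool:
    """Apply the default action unless the topic matches an exception."""
    is_exception = any(topic_matches(e, topic) for e in exceptions)
    return default_allow != is_exception
```

For example, with a default action of disallow and the single exception `orders/>`, a client could publish to `orders/eu/created` but not to `billing/invoices`.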

You can also individually configure each queue to modify:

- which clients can publish messages to the queue

- which clients can read messages from the queue

- which messages the queue attracts

Refer to Endpoint Permissions and Access Control for details about configuring endpoint permissions.

There are environments where real-time data access control and monitoring (auditing capability) is required. This is beyond the capability of relatively static permission lists. In these situations, you can offload subscription control to an on-behalf-of subscription manager that contains specific business logic to restrict data access.

For more information about event broker managed subscriptions, see Managing Topic Subscriptions on Behalf of Other Clients in this documentation and An Architectural Look at Managed Subscriptions in Solace in the Solace Blog.

Authorization to Change System and VPN-Level Configuration

You can limit the access of your administrator users to read and write specific VPN configurations and system-level configurations. As previously discussed, it is a best practice to provision individual administrative accounts to enable auditing of configuration changes.

For further information on managing your event broker administrator users, see User Authentication and Authorization and CLI User Access Levels.

Audit

With correctly authenticated and authorized users and administrators, you have a system that provides access to only those individuals who have the correct predefined privileges. However, to ensure that the system authenticates and authorizes the right individuals, the system must be self-monitoring and log events such as brute force password attacks as well as expected accesses to data and configuration changes. Logging this information is not enough; it needs to be expertly analyzed to gain insights into whether the system is operating in a secure manner.
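
As one example of the kind of analysis that logged events make possible, the sketch below flags a username when too many authentication failures occur within a sliding time window. The `FailedLoginMonitor` class, its thresholds, and the numeric timestamps are hypothetical; a real deployment would feed it events parsed from the broker's logs or message bus events, whose formats are not reproduced here.

```python
from collections import defaultdict, deque


class FailedLoginMonitor:
    """Flag usernames with too many failed logins inside a time window."""

    def __init__(self, threshold: int, window_seconds: float) -> None:
        self.threshold = threshold
        self.window_seconds = window_seconds
        self._failures = defaultdict(deque)

    def record_failure(self, username: str, timestamp: float) -> bool:
        """Record one failure; return True if the user is now suspicious."""
        recent = self._failures[username]
        recent.append(timestamp)
        # Drop failures that have fallen out of the sliding window.
        while recent and recent[0] <= timestamp - self.window_seconds:
            recent.popleft()
        return len(recent) >= self.threshold
```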

See Displaying and Clearing Logs for general information about Solace event broker logs.

Auditing Configuration Changes

Each configuration change can be logged on a command-by-command basis, irrespective of whether the change was done using the CLI or through one of the programmatic interfaces (SEMPv1 or SEMPv2). As previously discussed, to ensure auditing capability, each administrator or change management request should have its own login account.

See Monitoring Events Using Syslog for details about the command log format.

Auditing Data Access

The event broker logs show every client connection and disconnection event, including the queues the client has bound to and the number of messages they have received. It is also possible to log every topic subscribe and unsubscribe event, but doing this impacts the performance of subscription changes. Therefore, we recommend receiving this information over the messaging interface and not through logs or Syslog.

To learn more about logging events generated by the broker itself, see the following:

- Subscribing to Message Bus Events

- Subscribe Event Topics

- CLIENT_CLIENT_CONNECT

- CLIENT_CLIENT_BIND_SUCCESS

- CLIENT_CLIENT_DISCONNECT

Encryption

Encryption prevents unauthorized data access (both intentional and unintentional) and ensures data integrity. If an unauthorized individual gets access to your data during transfer, not only can they steal it, but they can also manipulate it to force your system to achieve their desired outcome.

Encryption in Closed Systems

In closed systems, where the producers and consumers of data are managed by one entity, and the security requirements are homogeneous across all producers and consumers, data should be encrypted as close as possible to the data origin and decrypted only during data consumption. The result would be an opaque encrypted byte stream flowing through the messaging system, with plain-text headers used for routing the data to the correct destinations and time-to-live behaviors. Application teams would manage the encryption keys.

Encryption in Open Systems

In a more open system, where it may not be practical for all consumers and producers to share the encryption keys or have the same level of security, encrypting data hop-by-hop in the messaging layer is an option. For example, in a system that has internal and external users, the external users could have their own encryption keys, and the internal users may not require encryption at all. The data could be decrypted at each broker hop and re-encrypted for next-hop peers that require encryption. While there is no need for end-to-end key sharing, the producer application cannot dictate that the encrypted data it has sent will remain encrypted throughout the system. System administrators would have to ensure that the authorization policies prevent data transmission over an unencrypted link (if that is required).

This class of application requires encryption in the following areas:

- Encryption of the administrator connection


Securing the management interface is vital in creating a secure system. Event broker management interfaces are secured through the following implementation:

- Control plane access is secured by SSH

- Broker Manager is accessible over HTTPS

- SEMP / SEMPv2 is accessible over HTTPS

For further information, see TLS/SSL Encryption Configuration for SEMP Service.

- Network encryption between brokers and clients


The TLS / SSL Encryption and Monitoring TLS/SSL Configuration and Connections sections provide overviews of event broker network encryption. These discussions cover various TLS options, setting cipher suites and signature algorithms for each connection type, as well as information on server and client certificate revocation.

- Encryption of bridges and message routing


Event broker encryption ensures that data being transmitted across a wider network, even a global public network, is safe from unauthorized access and manipulation. Take a look at Configuring TLS/SSL and Configuring Client Certificate Authentication to learn about Message VPN bridge configuration for a secure system.

- Disaster recovery link encryption


Just like bridge encryption, disaster recovery (DR) link encryption, including encryption into the DR site, ensures denial of unauthorized access and manipulation of data across a wider network. To read more about the event broker's disaster recovery link encryption, see TLS/SSL Encryption Configuration for Replication Config-Sync Bridges.

- Encryption of disks and data at rest


Solace event brokers do not encrypt the disks directly but instead rely on the underlying infrastructure to encrypt data at rest.

We discussed the Solace event broker's security features and how they can be used to achieve secure systems. Now let's look at how you can secure the event broker itself. There are three main areas to consider when securing the event broker's operating environment: the network, the host, and data at rest.

Securing the Network

The network interfaces are usually the primary access points and points of attack from outside entities. If the event broker is servicing only internal applications and clients, then no specific network security consideration is warranted beyond corporate policy. However, in the following scenarios, you should consider additional network security:

- external clients (mobile or web clients) accessing internal services

- a hybrid or multi-cloud environment, where some work is offloaded to the public cloud and sensitive work is kept in private data centers

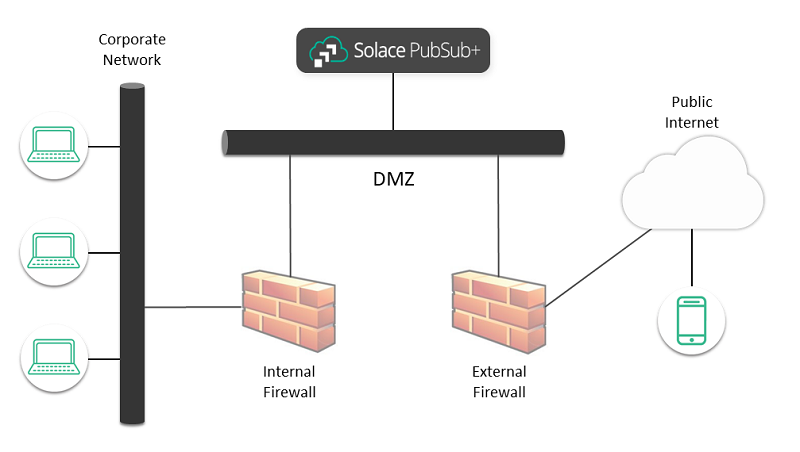

When external clients are allowed to access internal resources, a Defense in Depth (DiD) approach is standard practice. In this approach, defensive mechanisms are layered to protect valuable data and information. Typically, an external firewall handles threats such as SYN floods and other denial-of-service (DoS) attacks, and may also restrict incoming protocols and IP addresses. The traffic then moves to an internal firewall that protects internal services, for example using policies that limit externally initiated connections.

Internal hosts that do need to accept external connections sit between these two firewalls (that is, between the public internet and the corporate network) in a network commonly called a Demilitarized Zone (DMZ). Using an event broker in the DMZ allows external clients to connect to and access internal services without requiring those internal services to accept external connections, as shown in the diagram below. While it is possible to have separate network interfaces for internal- and external-facing traffic, this is not required.

We recommend that you do not place the event broker directly on the public network; it should be placed behind the external firewalls.

Solace Appliance Event Brokers

A Solace Appliance Event Broker (appliance event broker) has hardened data interfaces with no standard OS receiving data and no TCP forwarding directly to backend applications. This has the advantage of limiting the effects of network attacks like SYN floods and reducing attack vectors for standard open ports and buffer overflow vulnerabilities. However, the broker is not a firewall; it does not have all the configuration and auditing capability that you would expect from a firewall.

The Solace Appliance Event Broker does not have a standard operating system (OS) in the data path for most messages; it has an OS on the control plane for appliance event broker management. This management OS is exposed through a separate network interface that can be restricted to a different trusted network.

Solace Software Event Brokers

The same model also applies when Solace Software Event Brokers (software event brokers) are placed in a public cloud infrastructure. It is generally recommended that you place publicly accessible resources behind a firewall or a load balancer to mitigate DDoS attacks. For more information about additional recommendations, see AWS Best Practices for DDoS Resiliency.

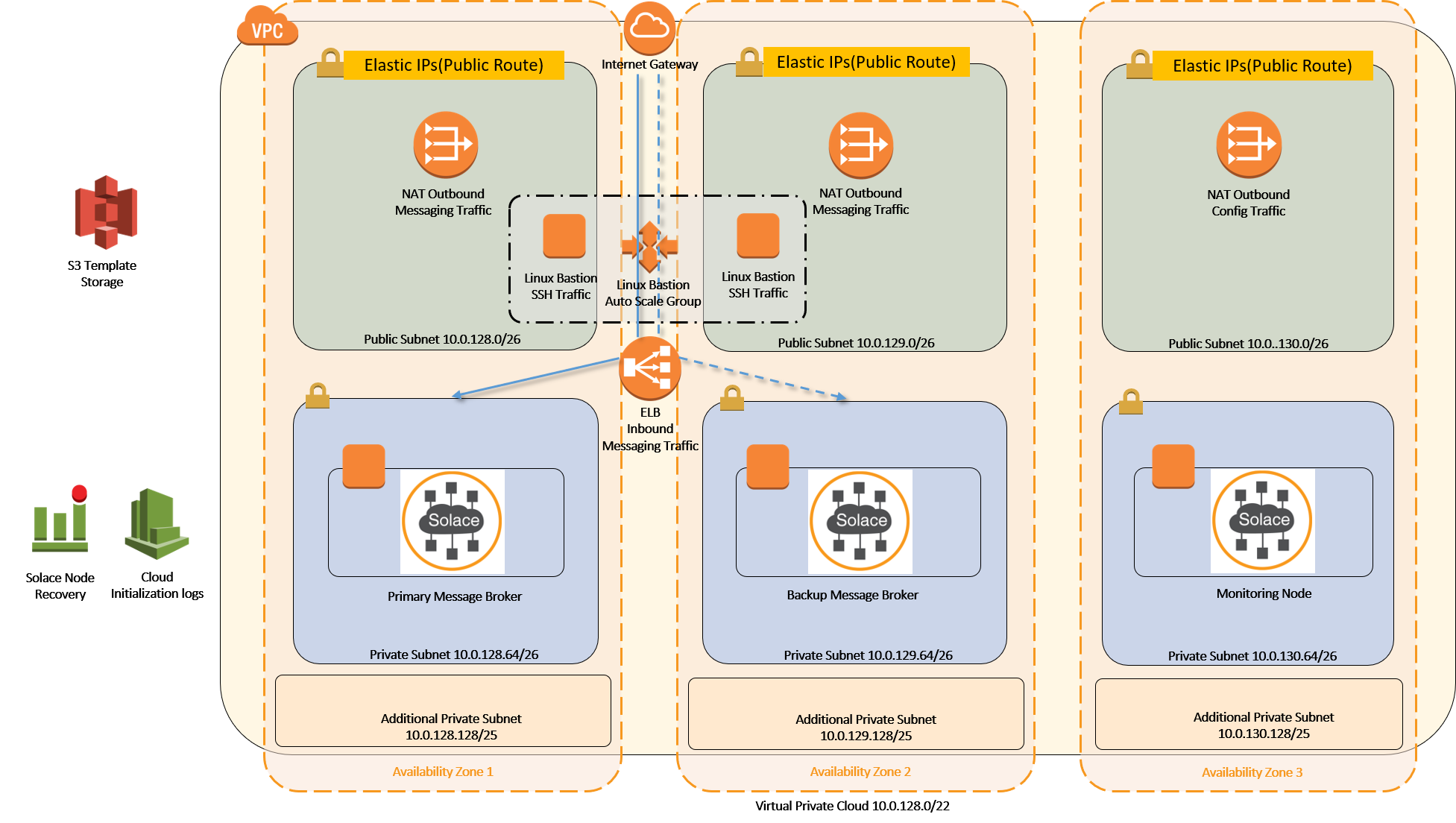

The diagram below illustrates a high-availability (HA) event broker set up in AWS public cloud infrastructure. The Internet Gateway at the top is the exterior connection to the Internet. Below the Internet Gateway is the DMZ layer that contains NATs, Bastion Hosts, and ELB. The DMZ layer prevents direct connections to and from underlying resources—in this case, the event brokers. The ELB acts as a load balancer/traffic director and a firewall, thus mitigating and preventing any denial of service and TCP level attacks. Below the DMZ layer are the Solace event brokers; they are protected by the upper layer and are not directly addressable from the Internet. The event brokers terminate the NAT’ed TCP connections, do authentication and authorization, and may terminate TLS connections. They do not directly forward IP or TCP traffic to applications but instead re-encapsulate data in internally addressed connections. The final layer at the bottom is where the applications sit. They are only reachable from the event brokers and possibly from the Bastion jump boxes.

To learn more about setting up this configuration in the AWS public cloud infrastructure, check out the quickstart in GitHub.

Solace Event Broker Services

For Solace Event Broker Service (event broker service) in Solace Cloud, all best practices for public cloud service placement are implemented. For more information, see Security in Solace Cloud and Solace Cloud security.

Securing the Host

Once the network is secure, the next thing to look at is the physical host or base OS, because tampering at this level can circumvent all other security measures.

Solace Appliance Event Brokers

As described above, the appliance event broker does not have a standard OS in the data path for most messages, but it has an OS on the control plane for management. The host OS is limited to expose only the functions needed to perform its tasks, all non-essential processes and applications are removed, and a minimal set of ports is exposed. The host OS is rigorously patched for security vulnerability fixes and regularly tested for vulnerabilities.

Solace Software Event Brokers

The software event broker is packaged as a Linux container. The customer is responsible for all host OS hardening, patching, and testing. This task can be mitigated in cloud environments by using a base Linux OS from the cloud service provider.

Given that the software event broker is deployed as a Linux container, securing the broker centers around two activities: securing the container and deploying the container in a secure host environment. Once these activities are completed, sensitive information such as passwords and certificates must also be protected by bootstrapping the software event broker.

Securing the Container

Many of the vulnerability patching and testing activities for the appliance event broker are also carried out on the software event broker container. The container is developed based on the best practices outlined in the Center for Internet Security (CIS) guidelines and tested on each release with the derived benchmark. The software event broker performs self-checks to validate the integrity of critical system files, which detects corruption and may detect tampering.

Deploying the Container in a Secure Host Environment

Avoid using elevated privileges within a container. If a root user can break out of the container, they will have those same elevated privileges on the host node. For example, if you have root access in the container and then mount the host OS partition, you will also have root access on that partition, and therefore will be able to edit system files. This would allow you to enable services or change user configuration and passwords.

Default Ports

Root capabilities are required to listen on ports below 1024. To listen on a port below 1024, the user ID must be set to 0 (root), and the container must be created with the NET_BIND_SERVICE capability. For production deployments, we recommend running containers with a non-zero user ID. The default ports for new installs are as follows:

| Port | Traffic | Previous Port Assignment |
|---|---|---|
| 8008 | Web transport | 80 |
| 1443 | Web transport over TLS | 443 |
| 1943 | SEMP over TLS | 943 |
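
The reason for moving the defaults above 1024 can be checked mechanically. The short Python sketch below, which uses hypothetical names, tests whether a port falls in the privileged range and confirms that none of the new default ports do.

```python
PRIVILEGED_PORT_LIMIT = 1024  # ports below this need root capabilities

# New default ports taken from the table above.
DEFAULT_PORTS = {
    "Web transport": 8008,
    "Web transport over TLS": 1443,
    "SEMP over TLS": 1943,
}


def needs_root(port: int) -> bool:
    """Return True if listening on this port requires root capabilities."""
    if not 0 < port < 65536:
        raise ValueError(f"invalid TCP port: {port}")
    return port < PRIVILEGED_PORT_LIMIT
```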

Docker

To avoid allowing elevated privileges within a container, we recommend the following docker create settings:

| Option | Description |
|---|---|
| --privileged=false | This option denies extended privileges to the container. While this option can mean different things on different systems, it should restrict the ability to modify host files, devices, and network stack. In OpenShift this setting corresponds to the |
| --security-opt no-new-privileges | Use this option to prevent the container processes from gaining additional privileges (for example, using sudo to gain higher permissions within the container). |
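
If you script container creation, the recommended options can be assembled programmatically. The sketch below builds an argument list for `docker create` that includes both options from the table plus a non-zero user ID. The function name, the container name, and the image name used in the usage note are hypothetical placeholders; the sketch only constructs the command and does not run it.

```python
from typing import List


def docker_create_args(image: str, name: str, uid: int) -> List[str]:
    """Build a `docker create` command line with the hardening options."""
    if uid == 0:
        raise ValueError("run the container with a non-zero user ID")
    return [
        "docker", "create",
        "--name", name,
        "--user", str(uid),
        "--privileged=false",                     # deny extended privileges
        "--security-opt", "no-new-privileges",    # block privilege escalation
        image,
    ]
```

For example, `docker_create_args("example/broker:latest", "broker", 1000)` returns a list that could be passed to `subprocess.run`, assuming an image with that placeholder name exists in your environment.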

Kubernetes

The Kubernetes documentation explains in detail how to properly set up your security context.

We recommend the following settings:

    apiVersion: v1
    ...
    spec:
      securityContext:
        runAsUser: [non zero]
        runAsGroup: [non zero]
        fsGroup: [non zero]
      ...
      containers:
        ...
        securityContext:
          privileged: false
          allowPrivilegeEscalation: false
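
One way to verify these settings before deploying is to lint the pod spec. The Python sketch below checks a pod `spec` that has already been loaded into a dictionary (for example, from a parsed manifest) against the recommendations above. The function name and the message wording are hypothetical, and the check is deliberately minimal.

```python
from typing import List


def security_context_issues(pod_spec: dict) -> List[str]:
    """Return a list of deviations from the recommended security context."""
    issues = []
    pod_ctx = pod_spec.get("securityContext", {})
    for key in ("runAsUser", "runAsGroup", "fsGroup"):
        # A missing value or 0 (root) both count as a deviation.
        if not pod_ctx.get(key):
            issues.append(f"pod securityContext.{key} must be non-zero")
    for container in pod_spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        ctx = container.get("securityContext", {})
        if ctx.get("privileged", False):
            issues.append(f"container {name}: privileged must be false")
        # Require an explicit false rather than relying on a default.
        if ctx.get("allowPrivilegeEscalation", True):
            issues.append(
                f"container {name}: allowPrivilegeEscalation must be false"
            )
    return issues
```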

OpenShift

The OpenShift restricted SCC implements the Kubernetes security context settings listed above and more. Running your containers with the OpenShift restricted SCC should be sufficient.

Bootstrapping the Software Event Broker

Once the container has been configured to deploy without elevated privileges, sensitive information such as passwords and certificates must also be protected. This can be done by bootstrapping the software event broker during container creation.

Configuration keys are the bootstrapping tool for the software event broker. For configuring sensitive information, you can use secrets. A secret is a mechanism used by automated deployment tools (for example, Kubernetes) to store and transfer sensitive data to a host and make it available inside a container running on that host. Secrets are created in the controller application and then shared with the hosts that need them when the containers are deployed. To implement secrets, you create a secret (password or private key) and then attach the secret to your container. The automation environment then transfers the secret data securely to the instance and inserts it in the container through a file in a tmpfs (that is, in RAM, not on disk). Finally, a configuration key points to the location of the secret data inside the container.
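
The last step, in which a configuration key points to a file that holds the secret, can be illustrated from the consuming side. In the Python sketch below, the `resolve_secret` function and the `filepath` key suffix are hypothetical and chosen only for the example; they are not the broker's actual configuration key names, which are described in Configuring Secrets.

```python
from pathlib import Path


def resolve_secret(config: dict, key: str) -> str:
    """Return a secret, preferring a file reference over an inline value."""
    path_key = key + "filepath"  # hypothetical naming convention
    if path_key in config:
        # The deployment tool mounts the secret as a file (for example, on
        # tmpfs), so the value never has to appear in the configuration.
        return Path(config[path_key]).read_text(encoding="utf-8").rstrip("\n")
    return config[key]
```

Preferring the file reference keeps the secret out of environment variables and deployment manifests, which matches the motivation for using secrets in the first place.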

Solace Software Event Brokers support secrets for:

- Redundancy group passwords, server certificates, and CLI user passwords

- Anything else required to get a broker to the point where it can be managed via the Solace Event Broker CLI or Broker Manager (over TLS)

For more information on using secrets in the software event broker, see Configuring Secrets.

Solace Event Broker Services

In Solace Cloud, all the above-mentioned security concerns are addressed by a managed service (event broker service) as well as security-minded integration into cloud provider environments and services. For more information, see Solace Cloud security.

Securing Data at Rest

If the network or host is compromised, a proper level of protection for data at rest might well be the last line of defense against unauthorized data access. As discussed previously, if you are using TLS on the messaging layer, data is decrypted as it passes through the broker; this also means it is stored unencrypted in non-volatile disks by the event broker. For this reason, we recommend using self-encrypting disks.

Solace Appliance Event Brokers

For high-availability (HA) pairs, all persistent data is stored on the attached SAN, and configuration and logs are stored in internal redundant solid-state drives. The HA pair can only connect to one SAN at a time; this means that a complete SAN failure would trigger a Disaster Recovery (DR) event. If this setup is not suitable for your enterprise HA and DR strategy for applications, then SAN replication with data center and SAN failover in the fibre channel infrastructure might be required. Keep in mind that if you are using SAN disk encryption, the appliance event brokers must be able to mount and read/write to SAN partitions in the primary and DR sites.

For a discussion on SAN requirements for an appliance event broker, see External Disk Storage Array Requirements.

Solace Software Event Brokers

The software event broker uses a shared-nothing disk strategy for data storage. Each software event broker mounts its own partitions for data, configuration, and logs. If the data points are suitably placed (through mechanisms such as AWS availability zones, spread placement groups, or Azure Availability Sets), there should be no further requirement for disk replication beyond the messaging layer of HA and DR. Although software event brokers do not provide disk encryption, they interoperate with standard cloud-provider disk encryption, as well as standard Linux block device encryption.

Solace Event Broker Services

All the security concerns discussed above are addressed by the managed service as well as security-minded integration into cloud provider environments and services. For more information, see the Trust, Compliance & Security Center page.