DMR Subscription Propagation and Data Forwarding

After the administrator has established the relationships between them, the brokers in the event mesh dynamically discover the requirements for routing messages. As subscriptions are added and deleted, those changes are automatically advertised so that the network-wide knowledge of subscriptions is updated as changes occur. No manual intervention is required to keep subscription information current across the event mesh.

This process is somewhat different for nodes within the same cluster versus nodes in different clusters. These differences are described in the sections that follow.

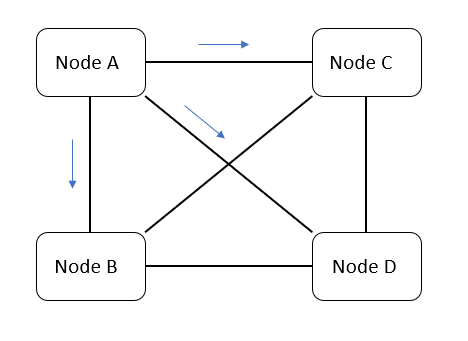

Subscription Propagation Within a Cluster

Each node advertises the subscriptions it needs to its directly connected neighboring nodes. Each node aggregates the subscription information for all of its clients and then sends it to its neighboring nodes. The receiving nodes don't further propagate those subscriptions. Since every node is connected to every other node, all the nodes become populated with a complete, non-duplicated set of subscription information for the entire cluster.

For example, in the above diagram, node A advertises a subscription, for instance payments, to nodes B, C, and D. Nodes B, C, and D won't re-transmit the payments subscription across their control channels. Note that each node may be a single broker or an HA pair/group of brokers.
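The single-hop behavior described above can be sketched in a few lines of Python. This is only an illustrative model, not broker code: the function name `propagate_within_cluster` and the dictionary layout are assumptions made for the example, and topics are compared as plain strings.

```python
from collections import defaultdict


def propagate_within_cluster(local_subs):
    """Model one round of subscription advertisement in a full-mesh cluster.

    local_subs maps a node name to the set of topics its own clients need.
    Returns, for each node, a table of topic -> set of nodes that want it.
    (Hypothetical helper for illustration only.)
    """
    tables = {node: defaultdict(set) for node in local_subs}
    for sender, topics in local_subs.items():
        # A node always knows its own clients' subscriptions.
        for topic in topics:
            tables[sender][topic].add(sender)
        # Full mesh: the sender advertises directly to every other node.
        for receiver in local_subs:
            if receiver == sender:
                continue
            for topic in topics:
                tables[receiver][topic].add(sender)
            # The receiver stores what it learned but never re-advertises it.
    return {node: dict(table) for node, table in tables.items()}


cluster = {"A": {"payments"}, "B": set(), "C": set(), "D": set()}
tables = propagate_within_cluster(cluster)
print(tables["B"])  # {'payments': {'A'}}
```

Because every node hears each advertisement directly from its source, one hop is enough for all four tables to agree, and no entry is ever learned twice.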

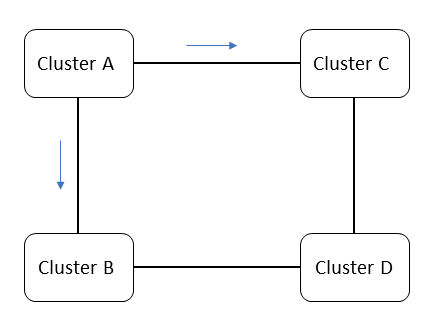

Subscription Propagation Between Clusters

Gateway nodes propagate subscription information between clusters. Before a gateway node sends that information over its external links, it first aggregates the full subscription set for its entire cluster.

For example, in the above diagram, cluster A advertises a subscription, payments, to clusters B and C. Clusters B and C won't re-transmit the payments subscription across their control channels. Therefore, cluster D does not receive the payments subscription.
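The same rule can be modeled at the cluster level. The sketch below is a simplified illustration under stated assumptions: the link layout (A linked to B and C, and D linked only to B) is a guess at the diagram, and `advertise_between_clusters` is a hypothetical helper, not a broker API.

```python
def advertise_between_clusters(cluster_subs, external_links):
    """Model subscription advertisement over external links.

    cluster_subs: cluster name -> set of topics aggregated across its nodes.
    external_links: iterable of (cluster, cluster) pairs joined by a link.
    Returns cluster name -> {topic: set of remote clusters that advertised it}.
    (Hypothetical helper for illustration only.)
    """
    learned = {cluster: {} for cluster in cluster_subs}
    for x, y in external_links:
        for src, dst in ((x, y), (y, x)):
            # A gateway sends only its own cluster's aggregated set.
            # Subscriptions learned from other clusters are not passed on.
            for topic in cluster_subs[src]:
                learned[dst].setdefault(topic, set()).add(src)
    return learned


subs = {"A": {"payments"}, "B": set(), "C": set(), "D": set()}
links = [("A", "B"), ("A", "C"), ("B", "D")]  # assumed topology
learned = advertise_between_clusters(subs, links)
print(learned["B"])  # {'payments': {'A'}}
print(learned["D"])  # {}
```

In this model cluster D learns nothing about payments because it has no external link to cluster A, which matches the example above.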

Subscription Persistence

Each event broker in a node persists its own Guaranteed subscriptions, but not those propagated to it by other nodes in the event mesh. When subscriptions propagate to an HA node, both brokers of that node learn of the subscriptions, regardless of which of the mates in the HA pair is currently active. Both brokers stay up-to-date with the overall network subscription set at the same time.

In the case of a broker failure, that broker's HA mate continues to provide service for the local node. There is no loss of subscription state or messaging service. When the failed broker restarts, it first resynchronizes its subscription database with adjacent nodes and its own HA mate before providing service.

If a failed broker has no HA mate, or if that mate has also failed, the entire DMR node has failed and its local clients do not have service. However, Guaranteed messages that were destined to be routed to that node continue to be enqueued on its neighboring nodes, and will be delivered to clients once the failed node is restarted. There is no loss of Guaranteed messages. However, Direct messages published while the node is non-operational are lost.

Subscription Limits

The number of subscriptions an event broker can keep track of is limited by its subscription capacity. In an event mesh that has event brokers with differing subscription capacities, the subscription propagation in the event mesh as a whole is limited by the node with the lowest subscription capacity.

To avoid problems with mixed subscription capacities, we recommend that all event brokers in the event mesh have the same subscription capacity.

For more information, see Subscription Capacity.

You can design your topology such that event brokers with lower subscription capacities are isolated from higher-subscription portions of the event mesh. Alternatively, you can implement subscription monitoring to ensure your event mesh stays within its subscription limit.
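As a rough illustration of subscription monitoring, the following sketch finds the limiting broker and reports how close the mesh is to that limit. The capacities, the subscription count, and the 80% warning threshold are made-up values, and `check_mesh_subscriptions` is a hypothetical helper; how you collect the real numbers depends on your own monitoring tooling.

```python
def check_mesh_subscriptions(capacities, mesh_subscription_count, warn_ratio=0.8):
    """Compare the mesh-wide subscription count to the smallest capacity.

    capacities: broker name -> subscription capacity.
    Returns (limiting_broker, limit, status), where status is one of
    "ok", "warning", or "over". (Hypothetical helper for illustration.)
    """
    # The mesh as a whole is limited by the lowest-capacity broker.
    limiting_broker = min(capacities, key=capacities.get)
    limit = capacities[limiting_broker]
    usage = mesh_subscription_count / limit
    if usage >= 1.0:
        status = "over"
    elif usage >= warn_ratio:
        status = "warning"
    else:
        status = "ok"
    return limiting_broker, limit, status


# Example values only; they are not taken from any product data sheet.
capacities = {"broker-1": 500_000, "broker-2": 5_000_000}
result = check_mesh_subscriptions(capacities, 420_000)
print(result)  # ('broker-1', 500000, 'warning')
```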

Prevention of Subscription Propagation Race Conditions

If you're doing Request/Reply messaging with DMR, you must use the Request/Reply methods of the Solace Messaging APIs to prevent possible race conditions associated with subscription propagation. More specifically, you must use the built-in Request/Reply topic (an internal P2P topic by default). If you set the ReplyTo to a custom value, there will be subscription delays and the possibility of a subscription propagation race condition.

For more information on Request/Reply messaging, see

For more information on Solace Messaging APIs, see Solace Messaging APIs.

Data Forwarding

As with subscription propagation, the mechanism for data forwarding is slightly different within a cluster and between different clusters. However, in either case, the same subscription or queue on different nodes attracts the same messages.

DMR preserves the quality of service for the message as published.

If the same queue names exist across multiple nodes in the event mesh, and a client is publishing to a queue by its name, the message is sent across the mesh to all queues with that same name. If you don't want this behavior, publish to queue-specific subscriptions or ensure that queue names are unique across the mesh.
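If you want to check whether queue names are unique across the mesh, a small audit along these lines may help. It assumes you have already gathered the queue names per node by some means; the input format and the function name `find_shared_queue_names` are assumptions for this sketch.

```python
from collections import defaultdict


def find_shared_queue_names(queues_by_node):
    """Return queue names that exist on more than one node.

    queues_by_node: node name -> iterable of queue names on that node.
    Result maps each shared queue name to the sorted list of nodes using it.
    (Hypothetical helper for illustration only.)
    """
    nodes_by_queue = defaultdict(set)
    for node, queues in queues_by_node.items():
        for queue in queues:
            nodes_by_queue[queue].add(node)
    return {
        queue: sorted(nodes)
        for queue, nodes in nodes_by_queue.items()
        if len(nodes) > 1
    }


inventory = {
    "node-a": ["orders", "audit"],
    "node-b": ["orders", "billing"],
    "node-c": ["shipping"],
}
shared = find_shared_queue_names(inventory)
print(shared)  # {'orders': ['node-a', 'node-b']}
```

Any name in the result would receive messages on every listed node when a client publishes to the queue by name.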

Within a Cluster

Application messages published by a client are sent over the data channels to directly connected neighboring nodes that have matching subscriptions or queues. Only one copy of a message is sent over the internal link, even if that message is destined for multiple clients or queues. The receiving nodes don't transmit the data any further. Since a message from a source node only traverses a single channel to get to a destination, forwarding loops never exist.
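The "one copy per link, fan out at the destination" rule can be modeled as follows. This is a minimal sketch that uses exact topic matching and a hypothetical function name, `forward_within_cluster`; it does not model wildcard subscriptions or any broker internals.

```python
def forward_within_cluster(source, topic, consumers_by_node):
    """Model forwarding of one published message inside a cluster.

    consumers_by_node: node -> {topic: [client or queue names]}.
    Returns (copies_on_links, deliveries):
      copies_on_links: (source, destination) -> number of copies sent.
      deliveries: node -> list of local clients/queues that get the message.
    (Hypothetical helper for illustration only.)
    """
    copies_on_links = {}
    deliveries = {}
    for node, subs in consumers_by_node.items():
        targets = subs.get(topic, [])
        if not targets:
            continue  # no matching subscription or queue: nothing is sent
        if node != source:
            # Exactly one copy crosses the link, however many targets match.
            copies_on_links[(source, node)] = 1
        # The destination node fans the message out locally.
        deliveries[node] = list(targets)
    return copies_on_links, deliveries


consumers = {
    "A": {},
    "B": {"payments": ["client-1", "client-2", "queue-x"]},
    "C": {"orders": ["client-3"]},
}
links, delivered = forward_within_cluster("A", "payments", consumers)
print(links)      # {('A', 'B'): 1}
print(delivered)  # {'B': ['client-1', 'client-2', 'queue-x']}
```

Node B never forwards the message onward, so the path from source to destination is always a single hop.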

Between Clusters

In this case, clusters send messages to other clusters directly via external links between gateway nodes. When a gateway node receives a message that originated from its own cluster, it forwards the message on its external links, only to the clusters that have matching subscriptions or queues. If a gateway node receives a message that originated from a different cluster, it forwards that message on its internal links, only to the nodes in its cluster that have matching subscriptions or queues. Only one copy of a message travels over an external link, even if that message is destined for multiple clients or queues.
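A gateway's forwarding decision, as described above, depends on where the message originated. The sketch below models that decision only; the function `gateway_forward`, its parameters, and the use of exact topic matching are assumptions for illustration.

```python
def gateway_forward(topic, origin_cluster, own_cluster,
                    remote_cluster_subs, local_node_subs):
    """Decide where a gateway node forwards a message.

    remote_cluster_subs: remote cluster -> set of topics it advertised.
    local_node_subs: node in the gateway's cluster -> set of topics it needs.
    Returns (link_type, destinations) with link_type "external" or "internal".
    (Hypothetical helper for illustration only.)
    """
    if origin_cluster == own_cluster:
        # Message from the gateway's own cluster: external links only,
        # and only to clusters with a matching subscription or queue.
        destinations = sorted(
            cluster for cluster, topics in remote_cluster_subs.items()
            if topic in topics
        )
        return "external", destinations
    # Message from another cluster: internal links only, so it is
    # never sent back out over an external link.
    destinations = sorted(
        node for node, topics in local_node_subs.items() if topic in topics
    )
    return "internal", destinations


remote = {"B": {"payments"}, "C": set()}
local = {"A1": {"payments"}, "A2": set()}
outbound = gateway_forward("payments", "A", "A", remote, local)
inbound = gateway_forward("payments", "B", "A", remote, local)
print(outbound)  # ('external', ['B'])
print(inbound)   # ('internal', ['A1'])
```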

Summary of Data Flow in an Event Mesh

DMR internal links are always used in a full mesh so that each node communicates directly with all the others. Every node in an internal DMR cluster exchanges subscriptions with every other node. Data flows only from the publisher-connected node to the consumer-connected node; messages do not flow through intermediary nodes.

Client subscriptions are summarized before being shared with neighboring nodes. This means that if node A exchanges subscriptions with node B, and node A has three clients with the same subscription, only one subscription is propagated to node B, on the first instance of the subscription, and that subscription is removed only after the last client removes its subscription. This minimizes the amount of subscription churn seen by the neighboring nodes.

Only one copy of each event message goes across the internal link, to be fanned out on the destination node.
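The subscription summarization described above behaves like reference counting: a node advertises on the first local subscriber and withdraws on the last. The class below is a minimal sketch of that idea; the name `SubscriptionSummary` and its methods are hypothetical and are not part of any Solace API.

```python
from collections import Counter


class SubscriptionSummary:
    """Reference-count local client subscriptions per topic.

    add() and remove() return True only when neighbors need to be told,
    that is, on the first add or the last remove of a topic.
    (Hypothetical class for illustration only.)
    """

    def __init__(self):
        self._counts = Counter()

    def add(self, topic):
        self._counts[topic] += 1
        # Advertise only when the first local client subscribes.
        return self._counts[topic] == 1

    def remove(self, topic):
        if self._counts[topic] == 0:
            raise KeyError(f"no local subscription for {topic!r}")
        self._counts[topic] -= 1
        if self._counts[topic] == 0:
            del self._counts[topic]
            return True  # last client gone: withdraw from neighbors
        return False


summary = SubscriptionSummary()
adds = [summary.add("payments") for _ in range(3)]
removes = [summary.remove("payments") for _ in range(3)]
print(adds)     # [True, False, False]
print(removes)  # [False, False, True]
```

With three clients on node A, node B sees one advertisement and one withdrawal instead of three of each.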

DMR external links are used between internally-meshed DMR clusters.